Problem Statement

Too few orders get reviewed by doctors, so Dandy currently relies solely on refabrication rates to understand which levers to pull to improve product quality. Refabrication rates are a lagging indicator of doctor dissatisfaction. We want to diversify how we learn where product quality can improve.

Our current feedback form is not very actionable. Our order ops team does not receive the information it needs to ‘fix’ the problem.

We have two different feedback form experiences, depending on which software the doctor has access to. We were slowly but surely migrating our users to an improved platform called Chairside, so creating a cohesive feedback experience was time-sensitive.

How might we enable Dandy to pull actionable insights from nuanced doctor input so we can improve product quality and prevent refabrications in the first place?

Order Feedback

Working Group

Design Lead / Anna Kang

Product Lead / Shawn Israilov, Anusha Sthanunathan

Tech Lead / Robin Weaver

Tech / Sumukh Barve, Aram Krakirian

What the numbers are saying

YTD, only 12% of orders have reviews

34% of practices have reviewed fewer than 5 lifetime orders

31% of Dandy practices have never reviewed an order

150 practices are responsible for 50% of all order reviews

What does success look like?

Increase the % of orders with reviews (aka Order Review Response Rate)

Increase the % of negative order reviews with actionable feedback

Reduce quality related churn

Iterative Solutions

At Dandy, we learn by doing. Our initial goal was to increase the amount of feedback submitted, then to keep partnering with our order ops and CX teams to refine what data we collect and evolve their SOPs accordingly.

Original form

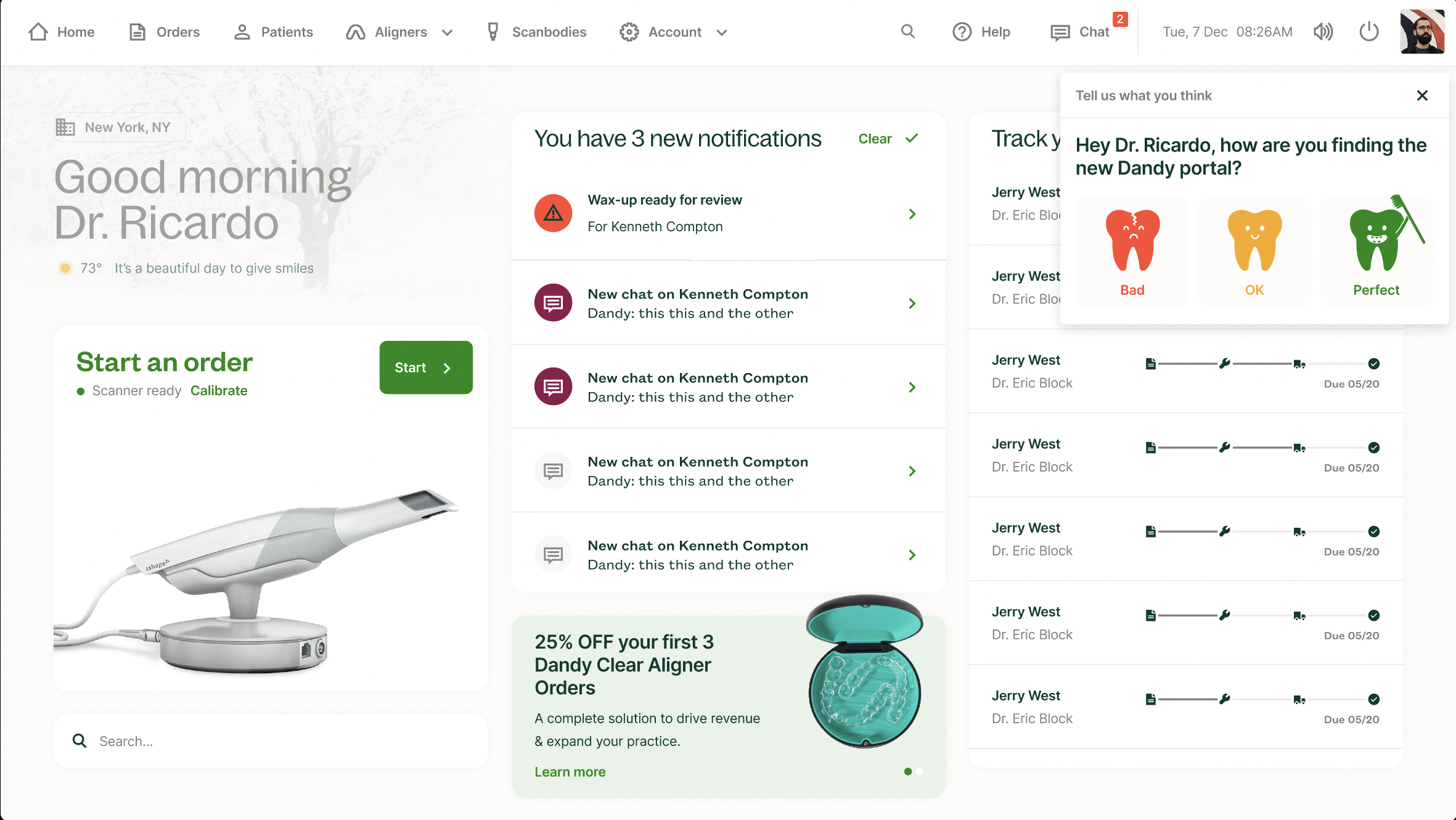

Redesign

Findings

Frustrated doctors submitting negative feedback felt mocked by the cartoonish satisfaction indicators.

The free-form text field was difficult for our order ops team to consume and act upon.

Regardless of which satisfaction indicator the doctor chose, we did not triage the feedback any differently.

Findings

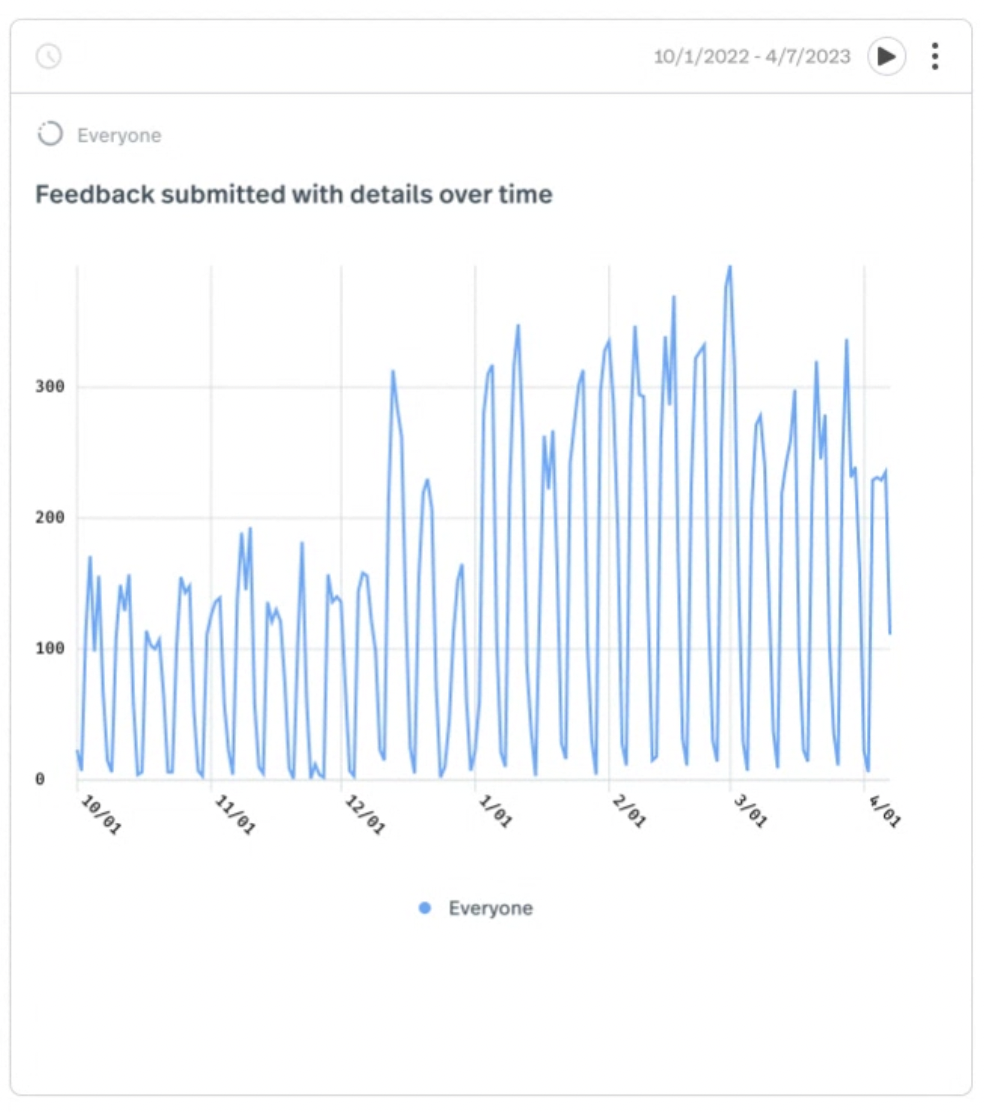

While more doctors (details below) were submitting feedback, we realized we still needed a more refined scale for measuring dissatisfaction so that we could prioritize high-risk doctors.

To cut scope, we released this redesign of the feedback form without examining how it ties into the refabrication form, its close cousin. That integration was our next step.

Results from this release

Doubled Order Review %

Two and a half months post-release, we've nearly doubled this metric, from a 10-11% weekly average to 18-21% on fully baked data, which is awesome!

The data baked so far validates our hypothesis: making it easier to submit positive feedback has resulted in more feedback submissions.

More Practices are Leaving Reviews

An 18% increase in the weekly count of practices leaving order reviews.

Doctor approved

“Reviews are fabulous, they flow through. The workflow is excellent, it cannot be improved.”

Fast follow

While Dr. Wilke says above that the feedback form ‘cannot be improved’, and we were flattered, we knew otherwise.

Next iteration (currently live).

New problem statement

Our methodology for measuring satisfaction was binary. We were boxing doctors in, forcing them to select from a very limited set of issues, which contradicted our dentistry-first image. For example, shade is the #1 quality issue for anteriors, yet we only allowed doctors to say whether the restoration was cloudy or the wrong shade was used.

Our current process for capturing order feedback does not require order reviews to be submitted. In fact, the only entry point a doctor has to review an order is the portal, which is easy to miss. Since our goal was to capture feedback from new doctors, we needed to incentivize them to give us consistent feedback on their first X orders placed and to increase the number of touchpoints where we remind doctors to provide feedback.

How might we incentivize early adopters to leave feedback using a 1-5 satisfaction score, along with codified reasons for why the product fell short? We wanted to give doctors a set of codified responses that they expect to see from a dental lab when giving feedback, AND that allow operators to prioritize what to triage and get to root cause faster (e.g., operations will prioritize triaging a 1-star review over a 3-star review, and so on).